You spend three days refining a product launch, aligning the messaging, polishing the demo video. Then someone fires up an AI generator, types “modern tech product hero shot,” and pastes the result into the landing page. It looks off. The lighting doesn’t match your brand. The text is gibberish. The proportions feel slightly wrong. You regenerate. Same problem. You regenerate again. Now you’re six attempts in, the asset still isn’t usable, and the launch is tomorrow.

This isn’t a failure of the model. It’s a failure of process.

The One-Shot Trap That Wastes Most AI Generations

Most product teams treat an AI video generator like a vending machine: put in a prompt, get out an asset. The reality is closer to working with a talented but inexperienced junior designer. You get something interesting on the first try, but rarely something that’s ready for a billboard, a homepage hero section, or a compressed social video card.

The trap has three layers.

First, teams expect a single prompt to handle everything: subject, composition, mood, brand color palette, text legibility, and video pacing. Models are getting better, but no current system can reliably nail all of these in one pass, especially on launch assets where margins are tight and the audience includes stakeholders who care about kerning.

Second, most people don’t use seed control. They click generate, see something that’s 70% right, and click again hoping for a better roll of the dice. But each generation is an orphan — that 70% result can’t be reproduced, adjusted, or built upon. You end up starting from scratch every time, discarding useful compositions that just needed a little polish.

Third, teams skip the refinement loop. A generation with a slightly wrong color cast or a garbled brand name isn’t dead; it just needs post-processing. But many workflows treat generation as the final step, not the first.

What a Repeatable Curation Pipeline Actually Looks Like

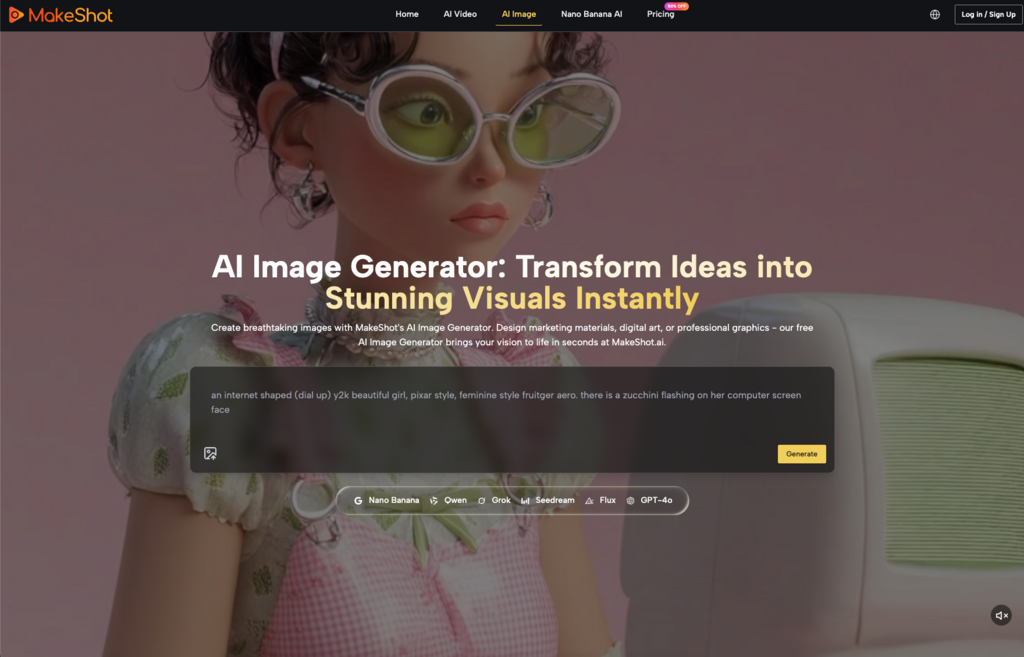

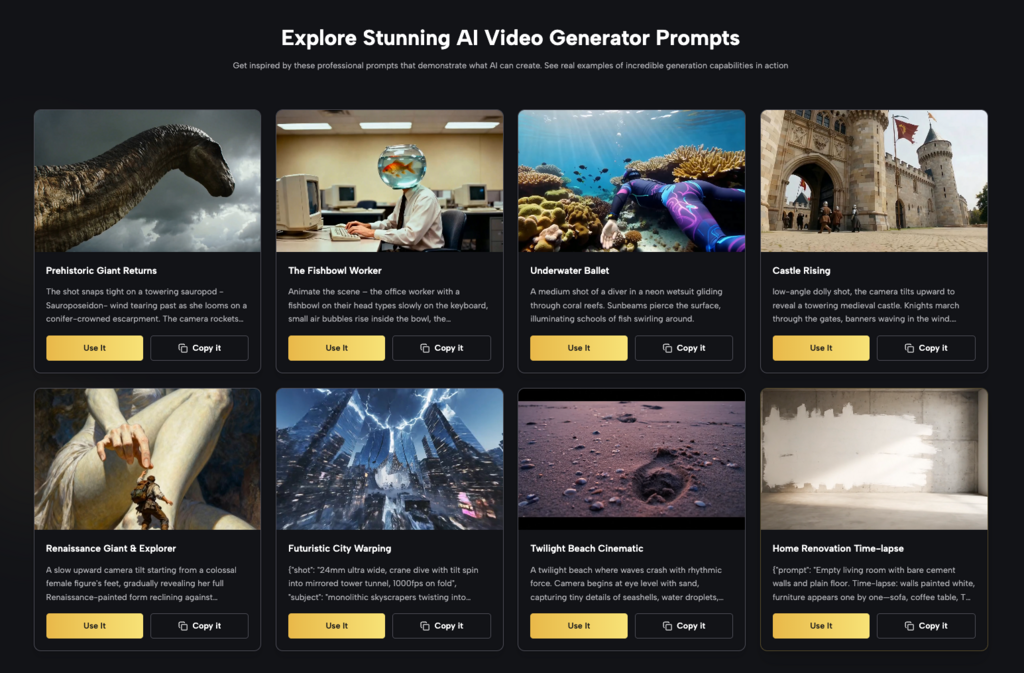

A usable pipeline moves from raw generation to campaign-ready asset through three defined stages. The specific tool matters less than the logic, but Banana AI illustrates the pattern well because its editor supports the kinds of fixes that product teams need most.

Stage one: structured prompt families. Instead of typing “product launch video,” define the style, lighting, aspect ratio, and mood in the prompt itself. Then generate four to six variations from the same seed family, recording the seed used for each. This gives you a set of compositions that share a visual logic, rather than unrelated outputs sampled from scattered points in the model’s latent space.
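As a rough illustration of stage one, the sketch below builds a small prompt family in which style, lighting, and aspect ratio stay fixed while only mood and seed vary. Every name here (`GenerationRequest`, `build_prompt_family`, the example prompt text) is hypothetical and not tied to any particular generator’s API, and whether neighbouring seeds produce related compositions depends on the model. The point is simply that every request is written down and can be replayed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GenerationRequest:
    """One reproducible request: the full prompt plus the seed used with it."""
    prompt: str
    seed: int
    aspect_ratio: str


def build_prompt_family(subject, style, lighting, moods, aspect_ratio,
                        base_seed, seeds_per_mood=2):
    """Return requests that share style, lighting, and ratio; only mood and seed vary."""
    requests = []
    for mood_index, mood in enumerate(moods):
        for offset in range(seeds_per_mood):
            prompt = f"{subject}, {style}, {lighting}, {mood} mood"
            # Assumption: seeds are assigned consecutively from base_seed.
            seed = base_seed + mood_index * seeds_per_mood + offset
            requests.append(GenerationRequest(prompt, seed, aspect_ratio))
    return requests


family = build_prompt_family(
    subject="wireless earbuds on a desk",
    style="clean studio product photography",
    lighting="soft key light from the left",
    moods=["calm", "energetic", "premium"],
    aspect_ratio="16:9",
    base_seed=1000,
)

for request in family:
    print(request.seed, request.aspect_ratio, request.prompt)
```

With three moods and two seeds each, this yields six requests, which sits inside the four-to-six range suggested above. Keeping the list in version control alongside the outputs means a result that is mostly right can be regenerated and adjusted later.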

Stage two: targeted edits, not re-generations. This is where most teams waste time. A generation that’s 80% right but has a distracting artifact or a color that clashes with your brand palette shouldn’t be discarded. Use image-to-image and restyle features to fix those specific issues. With Banana AI’s image editor, you can adjust color casts, improve in-image text legibility, or recompose a scene without starting a fresh generation — the original structure stays intact. This is the difference between revising a rough draft and writing a new essay from scratch.

Stage three: narrow the pool. Iterate on the best two or three outputs rather than continuing to generate dozens of new candidates. The law of diminishing returns hits hard here — after a few hundred generations, the variety you’re getting is mostly noise. Spend your time refining the outputs that already work on composition and structure.

It’s worth noting that even with a strong pipeline, you’ll occasionally hit a wall where the model simply doesn’t understand what you’re asking for. That’s not a workflow problem; it’s a model limitation. Accept that some concepts will require a different AI video generator entirely, or manual post-production. No amount of prompt engineering can force a system to generate something it wasn’t trained to handle.

When Metrics Mislead: What You Cannot Conclude From Generation Speed Alone

Benchmark comparisons frequently highlight generation speed — seconds per frame, latency to first result. These numbers are easy to measure and easy to compare, but they tell you almost nothing about how long it takes to get a usable asset.

A model that generates a video in three seconds but requires twelve regeneration rounds to hit brand consistency is not faster than a model that takes ten seconds per generation but produces consistent, editable outputs on the third attempt. The bottleneck in most product launches isn’t the generation step; it’s the curation and editing that happen afterward.
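To put numbers on that comparison, here is a minimal sketch of the arithmetic. The generation times and round counts come from the example above; the two minutes of human review per round is an assumed figure chosen only for illustration, and `time_to_usable_asset` is a hypothetical helper.

```python
def time_to_usable_asset(seconds_per_generation, rounds, review_seconds_per_round):
    """Total seconds spent generating and reviewing until an asset is usable."""
    return rounds * (seconds_per_generation + review_seconds_per_round)


# Generation time only: the "fast" model is already slightly behind.
fast_generation_only = time_to_usable_asset(3, 12, 0)      # 36 seconds
steady_generation_only = time_to_usable_asset(10, 3, 0)    # 30 seconds

# Assumption: each round also costs about 120 seconds of human review.
fast_total = time_to_usable_asset(3, 12, 120)              # 1476 seconds
steady_total = time_to_usable_asset(10, 3, 120)            # 390 seconds

print(f"fast model:   {fast_total / 60:.1f} minutes")
print(f"steady model: {steady_total / 60:.1f} minutes")
```

On generation time alone the two are close, 36 seconds against 30. Once review time is counted, the number of rounds dominates: roughly 24.6 minutes against 6.5 under the assumed review cost.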

The safest inference you can draw is this: seed stability matters more than raw speed for launch assets. A model that produces visually consistent results across generations, even if slightly slower, lets you build a repeatable pipeline. A model that spits out wildly different compositions at high speed is a roulette wheel, not a production tool.

Plan for at least three to five revision rounds per final asset. If you’re hitting usable output on the first or second pass, great; that’s upside. But budget your timeline as if you won’t.

Building a Launch-Ready Checklist for AI Visuals

Before you hit publish with any AI-generated asset, run this short checklist. It won’t guarantee perfection, but it will catch the most common reasons why generated assets look unfinished.

Visual consistency. Do the colors, lighting, and proportions match your brand guidelines across all generated assets? If you’re producing a series — say, three hero images for different product variants — they should look like they belong together, not like three different models made them. If one frame has a cooler tint and another is warmer, fix that in post before you build the page.
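One way to make the tint check less subjective is a crude warmth score per asset. The sketch below is an assumption-laden illustration, not a color-science tool: it takes pixels as plain `(r, g, b)` tuples (in practice you would load them with an imaging library), scores warmth as mean red minus mean blue, and flags any asset that sits more than a chosen tolerance from the series median. The function names, sample pixels, and tolerance of 10 are all hypothetical.

```python
from statistics import mean, median


def warmth(pixels):
    """Mean red minus mean blue for a collection of (r, g, b) tuples in 0-255."""
    pixels = list(pixels)
    return mean(p[0] for p in pixels) - mean(p[2] for p in pixels)


def flag_color_outliers(assets, tolerance=10.0):
    """Return names of assets whose warmth differs from the series median by more than tolerance."""
    scores = {name: warmth(pixels) for name, pixels in assets.items()}
    center = median(scores.values())
    return sorted(name for name, score in scores.items()
                  if abs(score - center) > tolerance)


# Tiny hand-made samples standing in for real image data.
assets = {
    "hero_a": [(200, 180, 170), (190, 175, 165)],  # warm
    "hero_b": [(198, 182, 172), (192, 176, 168)],  # warm
    "hero_c": [(170, 180, 200), (165, 175, 195)],  # noticeably cooler
}

print(flag_color_outliers(assets))
```

Here `hero_c` is flagged because its blue channel outweighs its red while the other two lean warm. A flag like this only says where to look; the fix still happens in the editor.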

Text legibility. If your output includes in-image text — product names, headlines, UI mockups — check every character. AI models still mangle text unpredictably. Use editing tools to rewrite garbled characters or, better, plan to overlay your own text on the final asset rather than relying on in-generation text at all. This is one area where the model’s limitations are consistent enough to treat as a rule: don’t trust in-image text.

The ten-foot test. View the asset at full resolution on a large monitor. Then shrink it to a compressed social feed preview. Then view it on a phone at arm’s length. Does it still read well at every size? If details get muddy and contrast drops, the asset needs higher contrast, simpler backgrounds, or tighter framing. A generation that looks crisp in the editor often falls apart when compressed for real delivery channels.
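If you overlay your own headline on the asset, a quick numeric proxy for "does it still read" is the contrast ratio between the text color and the dominant background color behind it. The sketch below follows the WCAG 2 definitions of relative luminance and contrast ratio; the function names and sample colors are illustrative, and reducing a background to a single dominant color is a simplifying assumption. It complements looking at the asset on real devices rather than replacing it.

```python
def _linearize(channel):
    """Convert an 8-bit sRGB channel value to linear light."""
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Relative luminance of an (r, g, b) color, from 0.0 (black) to 1.0 (white)."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1.0 (identical) to 21.0 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


headline = (255, 255, 255)       # white overlay text
busy_background = (200, 200, 200)  # light grey standing in for a washed-out scene
dark_background = (20, 30, 60)     # deep navy

print(round(contrast_ratio(headline, busy_background), 2))
print(round(contrast_ratio(headline, dark_background), 2))
```

WCAG treats 4.5:1 as the minimum for normal-size text. White on the light grey falls well below that, while white on the navy clears it comfortably, which matches the advice above to prefer simpler, higher-contrast backgrounds.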

One final note: your team’s sense of what looks “good enough” will shift the longer you stare at generations. That’s normal. Wait 24 hours before signing off on any AI asset. If it still passes the checklist after a night’s sleep, you’re probably ready to launch. If something gnaws at you, trust that feeling — you’ve already seen the pattern of what gets caught too late.

(DISCLAIMER: The information in this article does not necessarily reflect the views of The Global Hues. We make no representation or warranty of any kind, express or implied, regarding the accuracy, adequacy, validity, reliability, availability or completeness of any information in this article.)