The hardest part of making music is not the tools. It is the distance between an idea and something you can actually hear. Most people stop before they begin, not because they lack imagination, but because the process feels too technical, too slow, or too uncertain. This gap is exactly where an AI Music Generator begins to matter.

Instead of asking users to learn production software, instrument theory, or audio engineering, such a system reframes music creation as a language problem. You describe what you want, and the system attempts to interpret and materialize it. In my experience, this shift does not eliminate effort. It redistributes it, from technical execution to conceptual clarity.

What becomes interesting is not just that music can be generated, but how this changes the starting point of creativity itself.

How Language Becomes Musical Structure Internally

On the surface, text-to-music sounds simple. Underneath, several transformations take place.

From Descriptive Words to Acoustic Parameters

When you input something like “soft piano with emotional tone,” the system does not treat it as abstract poetry. Conceptually, each descriptor nudges the output toward certain acoustic properties:

- “soft” → lower velocity, reduced dynamic range

- “piano” → specific instrument timbre

- “emotional” → slower tempo, minor key tendencies

This translation layer is where most of the perceived intelligence comes from.
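
As a rough illustration of the mapping above, the translation layer can be pictured as a lookup from descriptive words to parameter hints. This is a toy sketch and not how any particular generator works: real systems typically learn these associations from data rather than from a hand-written table. The names `DESCRIPTOR_HINTS` and `interpret_prompt`, and all the values, are hypothetical.

```python
# Toy lookup table: descriptive word -> parameter hints.
# All keys and values are illustrative assumptions, not a real system's internals.
DESCRIPTOR_HINTS = {
    "soft": {"velocity": "low", "dynamic_range": "reduced"},
    "piano": {"instrument": "piano"},
    "emotional": {"tempo": "slow", "key_mode": "minor"},
}


def interpret_prompt(prompt: str) -> dict:
    """Collect parameter hints for every known descriptor in the prompt."""
    params = {}
    for word in prompt.lower().split():
        word = word.strip(".,!?\"'")  # drop surrounding punctuation
        params.update(DESCRIPTOR_HINTS.get(word, {}))
    return params


print(interpret_prompt("soft piano with emotional tone"))
```

Words the table does not know, such as “with” and “tone,” are simply ignored here. A learned model would instead let every word shift the result to some degree.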

From Parameters to Compositional Logic

Once interpreted, the system assembles:

- chord progressions

- melodic contours

- rhythmic patterns

These are not random. They follow patterns learned from large-scale musical data.
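
To make the assembly step more concrete, here is a minimal sketch that picks a chord progression, a rhythmic pattern, and a melodic contour from small hand-written tables. The tables, the `assemble` function, and its parameters are assumptions for illustration only. They stand in for the patterns a trained model would learn from data.

```python
import random

# Hypothetical pattern tables standing in for what a trained model learns.
PROGRESSIONS = {
    "minor": [["i", "VI", "III", "VII"], ["i", "iv", "v", "i"]],
    "major": [["I", "V", "vi", "IV"], ["I", "IV", "V", "I"]],
}
# Rhythm patterns: 1 = note onset, 0 = rest, eight steps per bar.
RHYTHMS = [[1, 0, 1, 0, 1, 0, 1, 0], [1, 0, 0, 1, 0, 0, 1, 0]]


def assemble(key_mode: str, bars: int = 4, seed: int = 0) -> dict:
    """Pick a progression, a rhythm, and a stepwise melodic contour."""
    rng = random.Random(seed)  # local RNG so the same seed repeats the result
    progression = rng.choice(PROGRESSIONS[key_mode])
    rhythm = rng.choice(RHYTHMS)
    # One chord per bar, cycling through the progression if needed.
    chords = [progression[i % len(progression)] for i in range(bars)]
    # Melodic contour as scale degrees 1..7, moving by at most one step per note.
    degree, contour = 1, []
    for _ in range(bars * sum(rhythm)):
        contour.append(degree)
        degree = min(7, max(1, degree + rng.choice([-1, 0, 1])))
    return {"chords": chords, "rhythm": rhythm, "contour": contour}


sketch = assemble("minor", seed=42)
print(sketch["chords"], sketch["rhythm"], sketch["contour"])
```

The choices are random but constrained: every option comes from a table of plausible patterns, which is a crude analogue of output that is varied yet not arbitrary.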

From Structure to Final Audio Output

Finally, everything is rendered into a waveform:

- instrument layers are stacked

- vocal synthesis may be applied

- the result is exported as a complete track

What users receive is not a sketch, but something that feels finished.
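
The rendering step can be sketched with nothing but the Python standard library. The example below stacks three sine tones as stand-ins for instrument layers and encodes the mix as WAV data. It is a deliberately simplified illustration: real generators produce far richer audio, often directly from a neural model, and the helper names here are hypothetical.

```python
import io
import math
import struct
import wave

SAMPLE_RATE = 44100  # samples per second


def sine_layer(freq_hz: float, seconds: float, gain: float) -> list:
    """One 'instrument layer': a plain sine tone, standing in for real timbre."""
    n = int(SAMPLE_RATE * seconds)
    return [gain * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE) for i in range(n)]


def mix(layers: list) -> list:
    """Stack layers by summing samples, then clamp to the valid [-1, 1] range."""
    return [max(-1.0, min(1.0, sum(samples))) for samples in zip(*layers)]


def to_wav_bytes(samples: list) -> bytes:
    """Encode float samples as 16-bit mono PCM in a WAV container."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # 2 bytes per sample = 16-bit audio
        wav.setframerate(SAMPLE_RATE)
        frames = struct.pack(f"<{len(samples)}h", *(int(s * 32767) for s in samples))
        wav.writeframes(frames)
    return buffer.getvalue()


# An A minor triad (A3, C4, E4) as three stacked layers, one second long.
layers = [sine_layer(f, 1.0, 0.3) for f in (220.0, 261.63, 329.63)]
wav_bytes = to_wav_bytes(mix(layers))
print(len(wav_bytes), "bytes of WAV data")
```

Writing `wav_bytes` to a file with a `.wav` extension gives something any audio player can open, which mirrors the "complete track" a user downloads.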

What Actually Happens When You Use It

The workflow is surprisingly short, but each step carries weight.

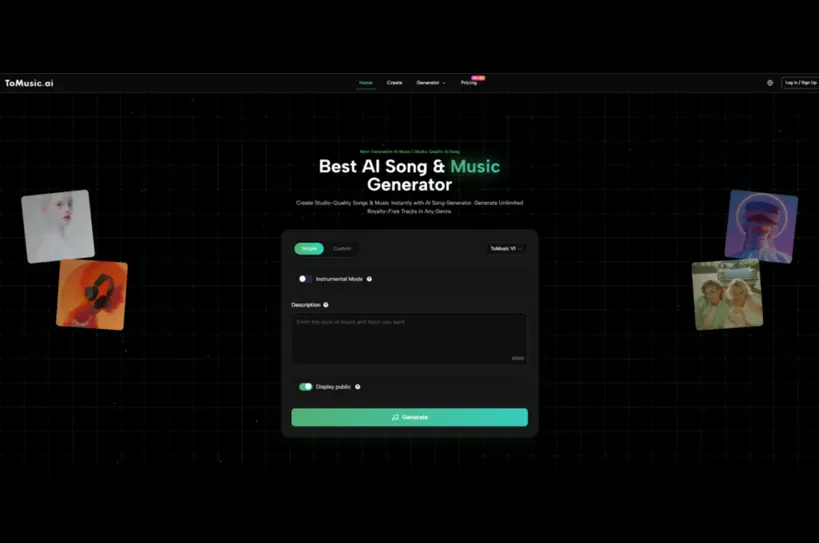

Step One: Define Musical Intent Clearly

You either:

- write a short descriptive prompt

- or input structured lyrics

The more specific the description, the more predictable the output becomes.

Step Two: Adjust Core Musical Constraints

You can typically choose:

- style (pop, cinematic, lo-fi)

- mood (dark, uplifting, calm)

- presence of vocals

These are not just filters—they influence the internal generation path.

Step Three: Generate And Compare Variations

The system produces multiple versions. In practice:

- results vary significantly even with the same prompt

- selecting is part of the process

- iteration improves alignment

This makes the workflow closer to “curation” than “construction.”
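
The generate-and-select loop can be sketched as follows. The `generate` function is a hypothetical stand-in for a real generation call and returns fake track metadata instead of audio. The `score` function stands in for the listener's judgment, which in practice happens by ear.

```python
import random


def generate(prompt: str, seed: int) -> dict:
    """Stand-in for a real generation call; returns fake track metadata."""
    rng = random.Random(f"{prompt}|{seed}")  # deterministic per (prompt, seed)
    return {"seed": seed, "tempo_bpm": rng.randint(60, 140), "brightness": rng.random()}


def score(track: dict, target_bpm: int) -> float:
    """Hypothetical preference: closer to the target tempo scores higher."""
    return -abs(track["tempo_bpm"] - target_bpm)


def curate(prompt: str, target_bpm: int, n_variations: int = 4) -> dict:
    """Generate several variations and keep the one that best matches the target."""
    candidates = [generate(prompt, seed) for seed in range(n_variations)]
    return max(candidates, key=lambda track: score(track, target_bpm))


best = curate("soft piano with emotional tone", target_bpm=72)
print(best)
```

The same prompt yields a different candidate for each seed, and the user's role is to pick among them. Raising `n_variations` or adjusting the prompt and running the loop again corresponds to the iteration described above.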

What Feels Different Compared To Traditional Production

The difference is less about speed and more about direction.

Traditional Workflow

- idea → technical execution → refinement → output

AI-Assisted Workflow

- idea → multiple outputs → selection → refinement

The inversion is subtle but important. You are no longer building from zero—you are choosing from possibilities.

Comparison With Conventional Music Creation Methods

| Aspect | Traditional Production | AI-Based Generation |
| --- | --- | --- |
| Skill Requirement | High (DAW, theory) | Low to moderate |
| Time To First Output | Hours to days | Minutes |
| Iteration Cost | High | Low |
| Creative Control | Precise but manual | Indirect via prompts |
| Output Variability | Limited by effort | High by default |

This comparison is not about superiority. It shows a shift in how effort is distributed.

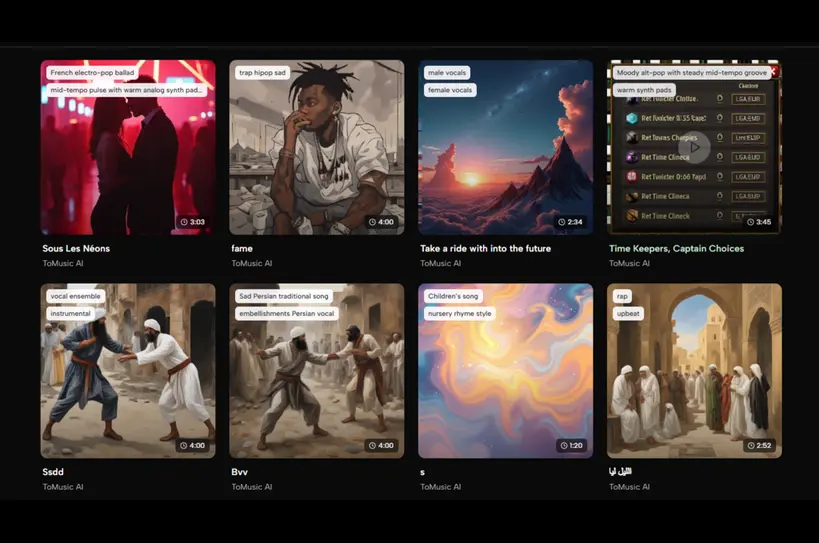

Where This Approach Works Particularly Well

Content Creation Environments

Short-form video creators often need:

- fast turnaround

- unique background music

- consistent tone

This workflow aligns naturally with those needs.

Early-Stage Idea Exploration

Instead of imagining how something might sound, you can:

- generate multiple interpretations

- evaluate direction quickly

This reduces uncertainty in early creative phases.

Non-Musicians With Strong Narrative Ideas

People who think in stories rather than sound can:

- express emotional intent

- rely on the system for execution

This opens a previously closed domain.

Limitations That Become Noticeable Over Time

No system fully replaces intentional composition.

Prompt Sensitivity

Small wording changes can produce large differences. This makes:

- a consistent sound harder to maintain

- earlier results harder to reproduce

Structural Control Is Indirect

You cannot always specify:

- exact chord changes

- precise arrangement timing

Control exists, but it is mediated through language.

Quality Requires Iteration

In my testing, the first result is rarely the best. It often takes:

- several generations

- minor prompt adjustments

before something feels usable.

Why This Matters Beyond Music Tools

The deeper implication is not about music—it is about interfaces.

We are moving from tool-based creation (buttons, timelines) to intent-based creation (language, description).

Music is just one example. The same pattern is appearing in:

- image generation

- video synthesis

- code generation

What changes is not just output speed, but how humans think about making things.

A Subtle Shift In Creative Ownership

When you generate rather than construct, authorship becomes layered:

- you define intent

- the system defines execution

- you select outcomes

This creates a hybrid form of authorship that feels different from traditional creation.

It is neither fully manual nor fully automated.

Where This Might Be Going Next

Based on current behavior, future systems may:

- allow finer structural control through language

- maintain consistency across multiple generations

- integrate editing rather than full regeneration

If that happens, the gap between idea and production may shrink even further.

What Remains Constant Despite The Change

Even with automation, some things do not change:

- clarity of intent still matters

- taste still determines selection

- iteration is still required

The tool changes the path, but not the need for judgment.

A Practical Way To Think About It

Instead of asking whether this replaces music production, it may be more useful to see it as:

- a fast prototyping layer

- a creative amplifier

- a translation system between language and sound

In that sense, the real value is not the music itself, but the reduction of friction between imagination and output.

(DISCLAIMER: The information in this article does not necessarily reflect the views of The Global Hues. We make no representation or warranty of any kind, express or implied, regarding the accuracy, adequacy, validity, reliability, availability or completeness of any information in this article.)